
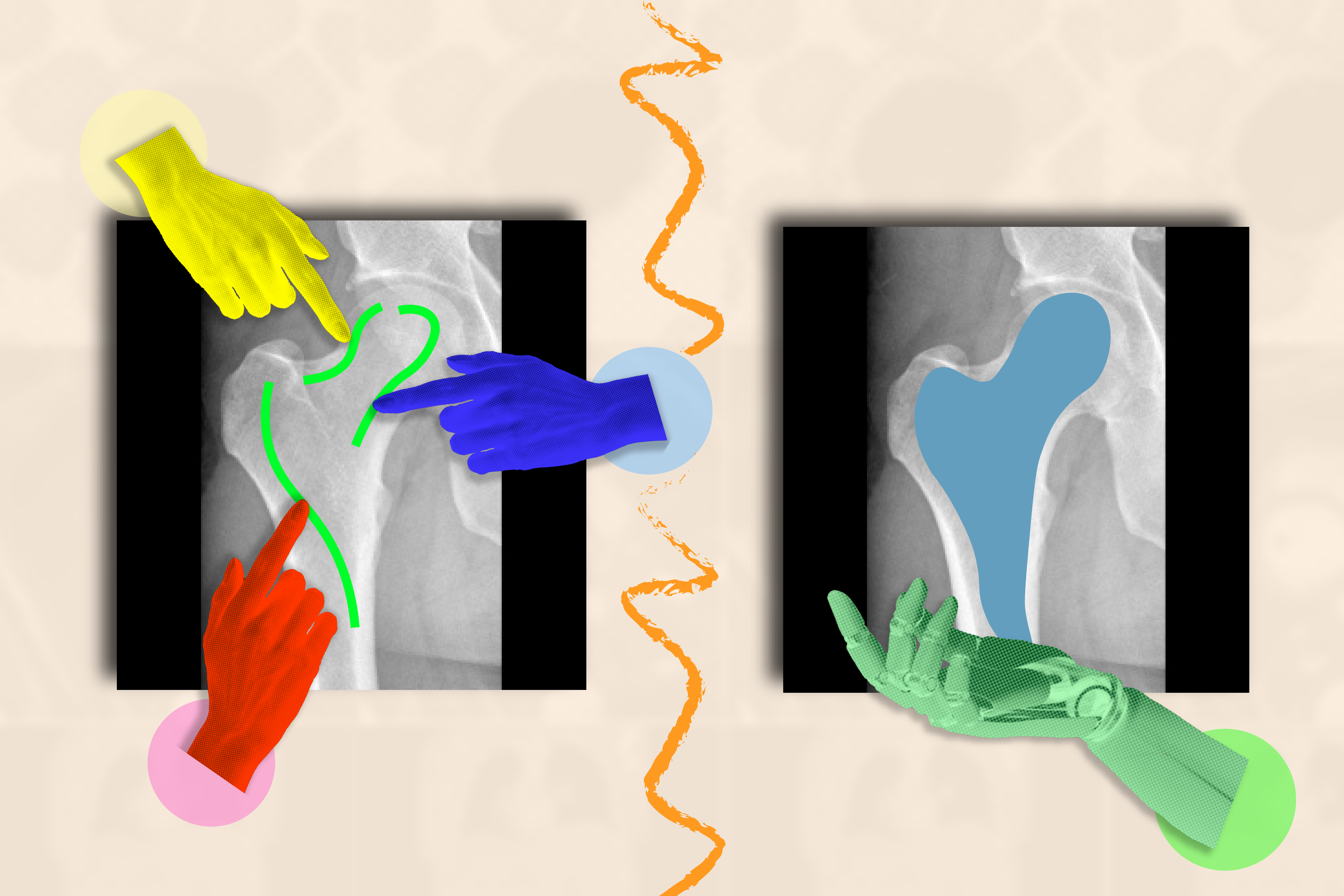

To the untrained eye, a medical image like an MRI or X-ray appears to be a murky collection of black-and-white blobs. It can be a struggle to decipher where one structure (like a tumor) ends and another begins.

When trained to understand the boundaries of biological structures, AI systems can segment (or delineate) regions of interest that doctors and biomedical workers want to monitor for diseases and other abnormalities. Instead of losing precious time tracing anatomy by hand across many images, an artificial assistant could do that for them.

The catch? Researchers and clinicians must label countless images to train their AI system before it can accurately segment. For example, you’d need to annotate the cerebral cortex in numerous MRI scans to train a supervised model to understand how the cortex’s shape can vary in different brains.

Sidestepping such tedious data collection, researchers from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), Massachusetts General Hospital (MGH), and Harvard Medical School have developed the interactive “ScribblePrompt” framework: a flexible tool that can help rapidly segment any medical image, even types it hasn’t seen before.

Instead of having humans mark up each picture manually, the team simulated how users would annotate over 50,000 scans, including MRIs, ultrasounds, and photographs, across structures in the eyes, cells, brains, bones, skin, and more. To label all those scans, the team used algorithms to simulate how humans would scribble and click on different regions in medical images. In addition to commonly labeled regions, the team also used superpixel algorithms, which find parts of the image with similar values, to identify potential new regions of interest to medical researchers and train ScribblePrompt to segment them. This synthetic data prepared ScribblePrompt to handle real-world segmentation requests from users.
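To make the idea of simulated annotation concrete, here is a minimal sketch of how a click and a scribble could be generated from an existing label mask. It is an illustration only, not the team's actual simulation: the function names (`simulate_click`, `simulate_scribble`), the straight-line stroke, and the toy mask are all assumptions.

```python
import random


def foreground_pixels(mask):
    """Return (row, col) coordinates of every nonzero pixel in a 2D mask."""
    return [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]


def simulate_click(mask, rng):
    """Pick one random pixel inside the labeled region, like a user click."""
    return rng.choice(foreground_pixels(mask))


def simulate_scribble(mask, rng):
    """Draw a straight stroke between two random foreground pixels and keep
    only the part of the stroke that falls inside the labeled region.

    A straight line is a simplifying assumption; real scribbles are curved.
    """
    pixels = foreground_pixels(mask)
    (r0, c0), (r1, c1) = rng.choice(pixels), rng.choice(pixels)
    steps = max(abs(r1 - r0), abs(c1 - c0), 1)
    stroke = set()
    for i in range(steps + 1):
        r = round(r0 + (r1 - r0) * i / steps)
        c = round(c0 + (c1 - c0) * i / steps)
        if mask[r][c]:
            stroke.add((r, c))
    return sorted(stroke)


# Toy 6x6 "ground-truth" mask containing a 3x3 square structure.
mask = [[1 if 1 <= r <= 3 and 2 <= c <= 4 else 0 for c in range(6)]
        for r in range(6)]

rng = random.Random(0)  # fixed seed so the sketch is repeatable
click = simulate_click(mask, rng)
scribble = simulate_scribble(mask, rng)
```

Repeating this over many masks and random seeds yields varied synthetic interactions without any human drawing them by hand.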

“AI has significant potential in analyzing images and other high-dimensional data to help humans do things more productively,” says MIT PhD student Hallee Wong SM ’22, the lead author on a new paper about ScribblePrompt and a CSAIL affiliate. “We want to augment, not replace, the efforts of medical workers through an interactive system. ScribblePrompt is a simple model with the efficiency to help doctors focus on the more interesting parts of their analysis. It’s faster and more accurate than comparable interactive segmentation methods, reducing annotation time by 28 percent compared to Meta’s Segment Anything Model (SAM) framework, for example.”

ScribblePrompt’s interface is simple: Users can scribble across the rough area they’d like segmented, or click on it, and the tool will highlight the entire structure or background as requested. For example, you can click on individual veins within a retinal (eye) scan. ScribblePrompt can also mark up a structure given a bounding box.

Then, the tool can make corrections based on the user’s feedback. If you wanted to highlight a kidney in an ultrasound, you could use a bounding box, and then scribble in additional parts of the structure if ScribblePrompt missed any edges. If you wanted to edit your segment, you could use a “negative scribble” to exclude certain regions.
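One common way to hand interactions like these to a segmentation network is to rasterize each prompt type into its own image-sized mask. The sketch below shows that idea for a bounding box, a positive scribble, and a negative scribble. The article does not describe how ScribblePrompt encodes its inputs, so the channel layout and every name here (`encode_prompts`, `box_channel`, `points_channel`) are assumptions made for illustration.

```python
def empty_channel(height, width):
    """Create an all-zero 2D grid the same size as the image."""
    return [[0] * width for _ in range(height)]


def box_channel(height, width, box):
    """Rasterize a bounding box given as (top, left, bottom, right), inclusive."""
    top, left, bottom, right = box
    channel = empty_channel(height, width)
    for r in range(top, bottom + 1):
        for c in range(left, right + 1):
            channel[r][c] = 1
    return channel


def points_channel(height, width, points):
    """Rasterize a list of (row, col) pixels from clicks or scribbles."""
    channel = empty_channel(height, width)
    for r, c in points:
        channel[r][c] = 1
    return channel


def encode_prompts(height, width, box=None, positive=(), negative=()):
    """Bundle all user interactions into named mask channels (hypothetical layout)."""
    return {
        "box": box_channel(height, width, box) if box else empty_channel(height, width),
        "positive": points_channel(height, width, positive),
        "negative": points_channel(height, width, negative),
    }


# Round 1: the user draws a bounding box around the structure.
first_round = encode_prompts(5, 5, box=(1, 1, 3, 3))

# Round 2: the user scribbles over a missed edge (positive) and marks a
# wrongly included corner for exclusion (negative).
second_round = encode_prompts(
    5, 5,
    box=(1, 1, 3, 3),
    positive=[(4, 2), (4, 3)],
    negative=[(1, 1)],
)
```

Keeping positive and negative interactions in separate channels lets a model tell "include this" apart from "exclude this" without any extra bookkeeping.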

These self-correcting, interactive capabilities made ScribblePrompt the preferred tool among neuroimaging researchers at MGH in a user study. 93.8 percent of these users favored the MIT approach over the SAM baseline in improving its segments in response to scribble corrections. As for click-based edits, 87.5 percent of the medical researchers preferred ScribblePrompt.

ScribblePrompt was trained on simulated scribbles and clicks on 54,000 images across 65 datasets, featuring scans of the eyes, thorax, spine, cells, skin, abdominal muscles, neck, brain, bones, teeth, and lesions. The model familiarized itself with 16 types of medical images, including microscopies, CT scans, X-rays, MRIs, ultrasounds, and photographs.

“Many existing methods don’t respond well when users scribble across images because it’s hard to simulate such interactions in training. For ScribblePrompt, we were able to force our model to pay attention to different inputs using our synthetic segmentation tasks,” says Wong. “We wanted to train what’s essentially a foundation model on a lot of diverse data so it would generalize to new types of images and tasks.”

After taking in so much data, the team evaluated ScribblePrompt across 12 new datasets. Although it hadn’t seen these images before, it outperformed four existing methods by segmenting more efficiently and giving more accurate predictions about the exact regions users wanted highlighted.
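The article does not say which accuracy metric the evaluation used. As an assumption, the sketch below uses the Dice coefficient, a standard overlap measure for comparing a predicted segmentation with a reference mask; the toy masks are invented for illustration.

```python
def dice_score(pred, target):
    """Dice coefficient between two binary 2D masks: 2|A ∩ B| / (|A| + |B|).

    Returns 1.0 when both masks are empty, since they agree perfectly.
    """
    intersection = pred_sum = target_sum = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            intersection += 1 if (p and t) else 0
            pred_sum += 1 if p else 0
            target_sum += 1 if t else 0
    if pred_sum + target_sum == 0:
        return 1.0
    return 2 * intersection / (pred_sum + target_sum)


# Reference mask with 4 labeled pixels; the prediction misses one of them.
target = [[0, 1, 1],
          [0, 1, 1],
          [0, 0, 0]]
pred = [[0, 1, 1],
        [0, 1, 0],
        [0, 0, 0]]

score = dice_score(pred, target)  # 2 * 3 / (3 + 4) = 6/7
```

A score of 1 means the prediction matches the reference exactly, and 0 means the two masks share no pixels.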

“Segmentation is the most prevalent biomedical image analysis task, performed widely both in routine clinical practice and in research, which leads to it being both very diverse and a crucial, impactful step,” says senior author Adrian Dalca SM ’12, PhD ’16, CSAIL research scientist and assistant professor at MGH and Harvard Medical School. “ScribblePrompt was carefully designed to be practically useful to clinicians and researchers, and hence to substantially make this step much, much faster.”

“The majority of segmentation algorithms that have been developed in image analysis and machine learning are at least to some extent based on our ability to manually annotate images,” says Harvard Medical School professor in radiology and MGH neuroscientist Bruce Fischl, who was not involved in the paper. “The problem is dramatically worse in medical imaging in which our ‘images’ are typically 3D volumes, as human beings have no evolutionary or phenomenological reason to have any competency in annotating 3D images. ScribblePrompt enables manual annotation to be carried out much, much faster and more accurately, by training a network on precisely the types of interactions a human would typically have with an image while manually annotating. The result is an intuitive interface that allows annotators to naturally interact with imaging data with far greater productivity than was previously possible.”

Wong and Dalca wrote the paper with two other CSAIL affiliates: John Guttag, the Dugald C. Jackson Professor of EECS at MIT and CSAIL principal investigator; and MIT PhD student Marianne Rakic SM ’22. Their work was supported, in part, by Quanta Computer Inc., the Eric and Wendy Schmidt Center at the Broad Institute, the Wistron Corp., and the National Institute of Biomedical Imaging and Bioengineering of the National Institutes of Health, with hardware support from the Massachusetts Life Sciences Center.

Wong and her colleagues’ work will be presented at the 2024 European Conference on Computer Vision and was presented as an oral talk at the DCAMI workshop at the Computer Vision and Pattern Recognition Conference earlier this year. They were awarded the Bench-to-Bedside Paper Award at the workshop for ScribblePrompt’s potential clinical impact.
